Sometimes I want a lot of detail...

The big advantage of using AI when creating images is - firstly - the large amount of details, which are - secondly - drawn at high speed. Of course there are differences between the AI. For example, the first Dall-e was simpler than Dall-e2, and could generate less detail. Midjourney and the Stable Diffusion algorithm raised the bar even higher than those two, both in terms of detail and quality of the image. That also means that you can go for an older AI if you consciously choose to make a simple image.

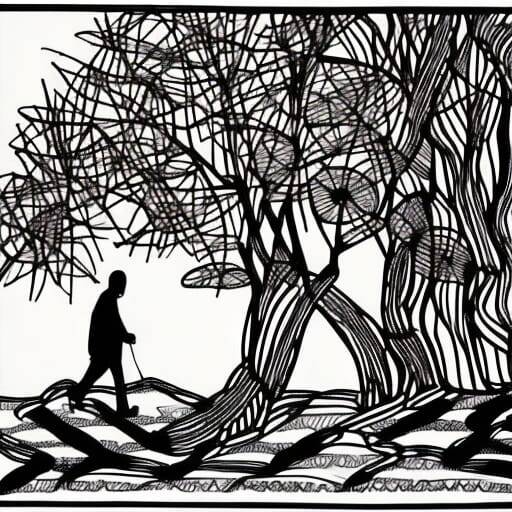

Here's an example of a line drawing using Stable Diffusion v2.1. You can see that the AI really likes to add detail.

The full specification is:

- "Continuous line art, man walking in Japanese garden, single line art, black and white, single line, minimalism, abstract art - Weight:3

- "photographic, rendered, 3D, texture" - Weight:-3

- "ugly, tiling, poorly drawn hands, poorly drawn feet, poorly drawn face, out of frame, extra limbs, disfigured, deformed, body out of frame, blurry, bad anatomy, blurred, watermark, grainy, signature, cut off, draft" - Weight:-0.3

Seed: 2273717043

Overall Prompt Weight: 50%

Model Version: Stable Diffusion v2.1

Sampling method: K_LMS

CLIP Guidance: NONE

... and sometimes I don't

So with Stable Diffusion you need quite a bit of text to say that you don't want much.

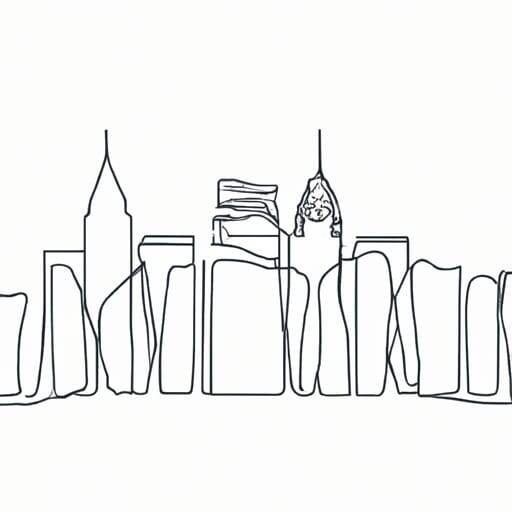

If you want it simple (and I mean really simple) you'll be done much faster with Dall-e2 than with Stable. Both are available on NightCafe Creator, making it easy to try. Here is another example of a simple creation.

Text Prompts: "Continuous line art Japanese garden, single line art" - Weight:1

Even the number of specifications is much less with Dall-e2.

The only pity is that I find the result just a little too meager. In this case I miss the details that make it recognizable as a Japanese garden. The tree is good, but hill, stones and fence don't convince me.

So I have a problem, but there are solutions for this. We can take advantage of a feature of the Stable Diffusion AI, which allows us to use any image as a start. (The default) 50% noise is added to ensure that the result does not become a copy.

Option 1: from Dall-e2 to Stable

We give Dall-e2 the text prompt:

"Continuous line art Statue of Liberty plus New York skyline, single line art, contour lines"

The first picture is the result. We give this as input to Stable version 2.1 with the text prompt: "Continuous line art Statue of Liberty plus New York skyline, single line art, contour lines"

This does not quite give the desired result, but the differences are clear.

Again, but now with "New York Manhattan Skyline" in the prompt. First Dall-e2, then Stable v2.1.

My preference is the second version, although the first is also interesting to look at. It's a difference in style. Dall-e2 stays closer to its assignment and is better in that sense. Stable gives more detail and a suggestion of depth. Tastes can of course differ.

Option 2: further development

We will stick to the Statue of Liberty for a while. Once we've found a seed value and a text prompt that yield reasonable results - meaning here: not too much detail - we can build on those results. So here again - but with only Stable Diffusion v2.1 - a succession of pictures.

"Continuous line art, Statue of Liberty New York and Skyline of New York, contour drawing, single line art, single line, minimalism"

Weight:3

"Ukiyo-e, hieroglyphs, ink drawing, kintsugi, comic art, manga, storybook illustration, woodcut, architecture"

Weight:0.5

"photographic, rendered, 3D, texture"

Weight:-3

From image two, every subsequent image is generated with its predecessor as starting image. View the results.

Halfway through, the seed value was switched to get more change. That is a random change, so success is not guaranteed, but by using the previous image as a start, the drawing remains simple.

The fifth picture made me the most happy, but tastes can differ here too.

The addition of the red flame does not quite fit in with the assignment, but it fits well with reality where the torch is made of shiny material.

What is also striking is that the AI secretly adds detail that is not immediately noticeable: the paper is also drawn. The background is not stark white, as it would be with Dall-e2. It's even getting greener and greener, as if it was inspired by the Statue of Liberty itself.

Not a serious problem. This is easily removed in an image editing program such as Krita or Photoshop.

Asking for line art served two purposes for me. The first was 'just' investigating to what extent the AI was capable to do this. The second was to continue with these line art in Krita to add color myself.

I see a lot of possibilities 😁.

Reactie plaatsen

Reacties